To mark the Day of Digital Archives I thought I would add a personal note about the “journey” I have made in the last two years. It was about this time in 2009 that it was announced that the AIMS Project was being funded by the Andrew W. Mellon Foundation and that I would be seconded from my post as Senior Archivist to that of Digital Archivist for the project.

At the time I had considerable experience of digitisation but very little of digital archives. So I began reading a few texts and following references and links to other sources of information until I had a pile of paper several inches high of things to read. At first there was a huge amount to take in – new acronyms, especially from the frightening OAIS, and plenty of projects like the EU-funded Planets initiative. It seemed that the learning would never stop – there was always another link to follow or another article to read, and it was really difficult to determine how much was making sense.

Talking to colleagues who were already active in this field also revealed how little digital media we actually had at the University of Hull Archives – just over two years ago we had only a handful of digital media whilst others were already talking about terabytes of stuff. Fortunately the AIMS project sought to break down the workflow into four distinct sub-functions and placed emphasis on understanding how the process compares with that for ‘traditional’ paper archives, which reduced the sense of being overwhelmed by it all.

Since then I feel I have come a long way – I have attended a large number of events, spoken at a fair few, and quickly become both familiar and comfortable with the language. I do appreciate the time I have been able to dedicate solely to the issue of digital archives, and I am aware that many colleagues are embracing this “challenge” without this luxury.

The biggest recommendation I can make is to start having a play with the software – many of the tools that we use at Hull University are free: Karen’s Directory Printer for creating a manifest, including checksums, of the records that have been received; FTK Imager for disc images; and so on. Nor do you have to wait for digital archives to arrive, or risk changing key metadata whilst you are experimenting – you can use any series of digital files or old media that are lurking in a drawer. We have also created a forensic workstation and shared our experiences via this blog.
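If you would like to see what a manifest with checksums involves before installing anything, the idea can be sketched in a few lines of Python. This is only an illustration and not how Karen’s Directory Printer works internally: the function names `sha256_of` and `build_manifest`, the choice of SHA-256 and the three columns recorded are all my own assumptions.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 checksum of a file, read in chunks to keep memory use low."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:  # read-only, so file contents are not altered
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> list[tuple[str, int, str]]:
    """List every file under root as (relative path, size in bytes, checksum)."""
    rows = []
    for path in sorted(root.rglob("*")):
        if path.is_file():
            relative = path.relative_to(root).as_posix()
            rows.append((relative, path.stat().st_size, sha256_of(path)))
    return rows
```

Calling `build_manifest(Path("some_folder"))` on a copy of any folder of old files gives a list that can be saved and compared against a later run; a checksum that has changed means the file content has changed. Note that reading a file may still update its last-accessed date on some systems, which is one reason to experiment on copies or behind a write-blocker.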

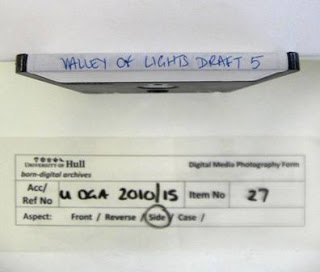

Once we had started to experiment, we created draft workflows and documentation and refined these as we experimented further – all tasks, from photography of media to using write-blockers, do become less daunting the more frequently you do them. Having learnt from many colleagues, we have started to add content to the born-digital archives section of the History Centre website. I have also used some of my own email to play with the MUSE visualisation tool to understand how it might allow us to provide significantly enhanced access to this material in the future.

Although the project funding has now finished and I have returned to my “normal” job, I do think that digital archives have now become part of my normal work. Each depositor is now specifically asked about digital archives, and in public tours of the building we explicitly mention the challenges and the opportunities of digital archives. We don’t have all of the answers yet – archiving e-mail in particular still scares me – but I don’t feel as daunted as I did two years ago.